Difference between revisions of "Wikidocumentary"

Revision as of 08:54, 4 November 2021

A wikidocumentary is a layered, time-based presentation composed of simple elements, and it can be viewed in several different ways.

What would a wikidocumentary look like?

Interfaces

- Web interface, page layout: Scrolling or clicking between frames. Maybe the user can change the layout and mode?

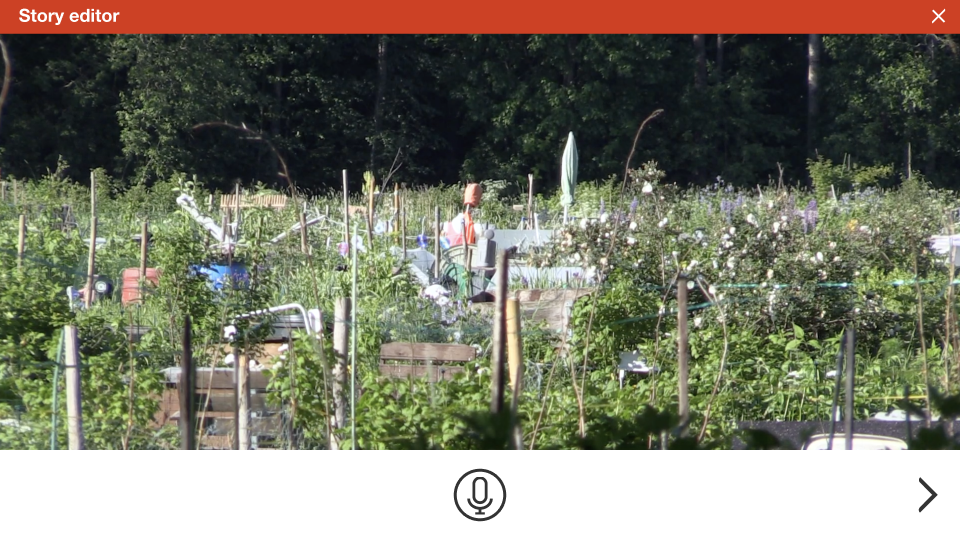

- Sit back cinema (auto-advance), full-screen, timed

- Mobile orienteering mode. Frames are located in places that the user can physically enter. The timelines must be flexible enough to allow for different transition times between places. Use soundscapes and other media loops, trigger frames by proximity etc.

Elements

Frames

Frames are the building blocks of a wikidocumentary.

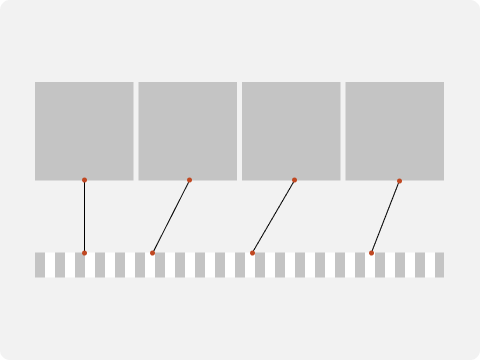

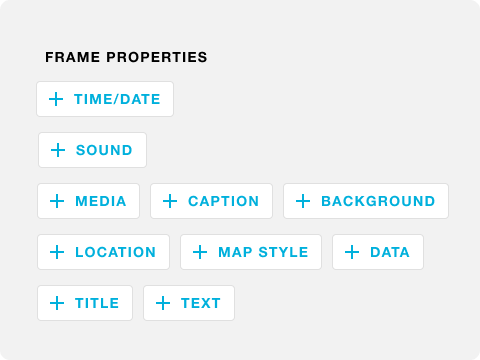

Frame properties

- Image or video. Later, multiple media files and an image sequence may be made possible.

- Captions

- Frame audio. This can be a soundscape or a loop / generative sound that plays while the user is in the frame. It can also be a sound that plays only once.

- Frame title and text. Possibly rich text with more images. Links.

- Background

- Location. Each frame can be assigned a coordinate location, which can be used in different ways: it can be plotted on a map, or it can be a place to go to in order to experience AR content.

- Map, with an option to change the style

- Background overlay layer. Georeferenced imagery can be used as a background layer. It can be an old map, aerial image, aerial video, or alternatively a large zoomable image. It can also be a video overlay.

- Data overlay. This can be geographic data plotted on the map, or annotations on an image or a map. A data point in a time series can be the basis for a frame.
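The frame properties listed above could be captured in a small data structure. The following is only a sketch under stated assumptions: the class names `Frame` and `Location`, every field name, and the use of WGS84 decimal degrees are hypothetical and are not taken from any existing Wikidocumentaries code.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Location:
    """Coordinate location of a frame (decimal degrees, WGS84 assumed)."""
    lat: float
    lon: float


@dataclass
class Frame:
    """One building block of a wikidocumentary (all field names hypothetical)."""
    title: str
    text: str = ""                                 # frame text, possibly rich text
    media_url: Optional[str] = None                # image or video
    captions: list[str] = field(default_factory=list)
    audio_url: Optional[str] = None                # soundscape, loop, or one-shot sound
    audio_loops: bool = True                       # False for a sound that plays only once
    background: Optional[str] = None
    location: Optional[Location] = None
    map_style: Optional[str] = None
    background_overlay_url: Optional[str] = None   # georeferenced imagery
    data_overlay_url: Optional[str] = None         # geographic data or annotations

    def is_locative(self) -> bool:
        """Only a frame with a location can be plotted on a map or visited."""
        return self.location is not None


# Example: a frame with an image and a coordinate location.
frame = Frame(
    title="Example frame",
    media_url="example.jpg",
    location=Location(lat=60.17, lon=24.94),
)
```

Keeping every property except the title optional reflects the list above, where most properties are possibilities rather than requirements.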

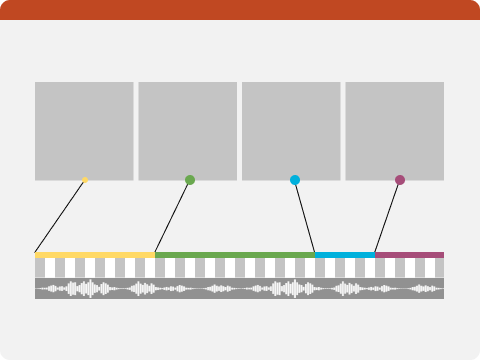

Timeline

- Audio track. The audio track can be, for example, a narrative, a musical piece, or an ambient recording. It can be an audio file or the audio track of a video file, or it can be recorded in the app. Frames are anchored on the timeline.

- Video

- Time series data (Wikidata query, file). Examples include a travel route or any animated thematic or statistical map.

- Transitions: Soundscapes, specific content. Data animations run and set the pace of the interval.
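One way to read "frames are anchored on the timeline" is that each frame has a start time on the audio or video track, and the frame shown at playback time `t` is the one with the latest anchor at or before `t`. The sketch below illustrates that reading only; the function name `active_frame`, the `(start_seconds, frame_id)` tuples, and the frame ids are assumptions made for the example.

```python
from bisect import bisect_right


def active_frame(anchors, t):
    """Return the id of the frame anchored at or before time t, or None.

    anchors: list of (start_seconds, frame_id) tuples sorted by start_seconds.
    t: current playback position on the timeline, in seconds.
    """
    starts = [start for start, _ in anchors]
    i = bisect_right(starts, t)  # number of anchors with start <= t
    return anchors[i - 1][1] if i > 0 else None


# Hypothetical timeline: three frames anchored on an audio track.
anchors = [(0.0, "intro"), (12.5, "harbour"), (40.0, "market")]
current = active_frame(anchors, 20.0)  # the "harbour" frame is active here
```

In the sit-back mode the same lookup could be driven by the player clock, while in the mobile orienteering mode the anchors would need to be adjustable to allow for different transition times.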

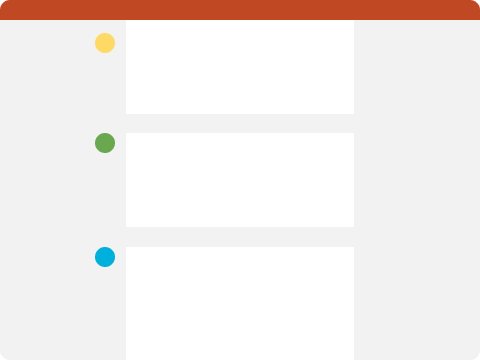

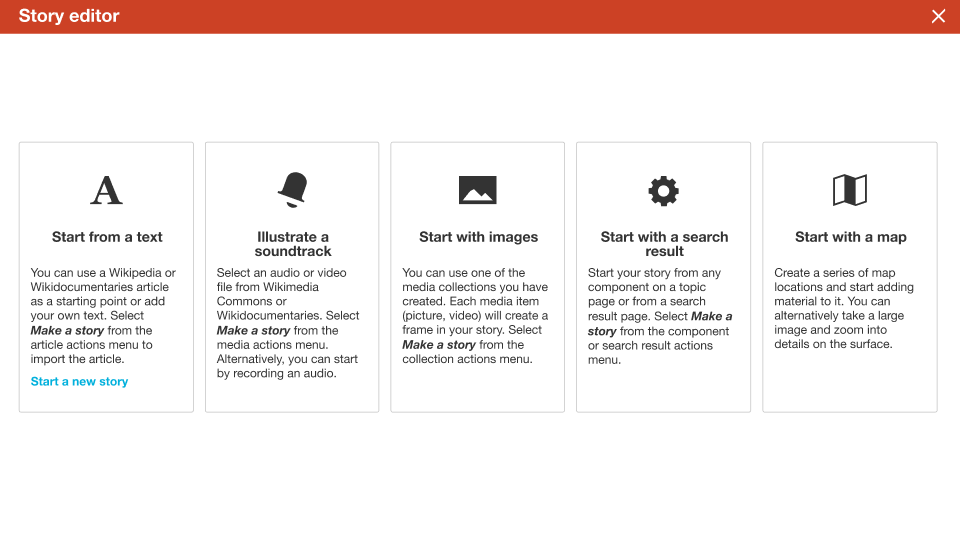

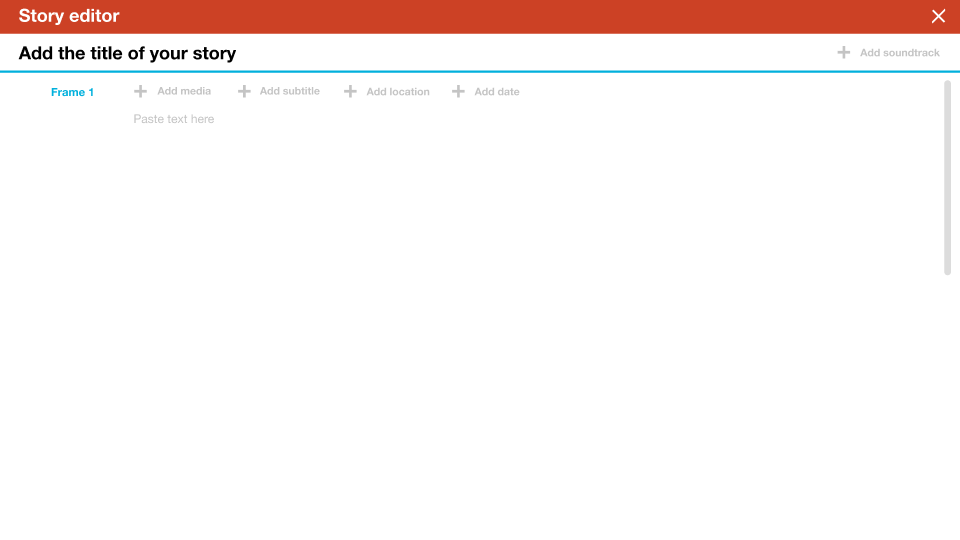

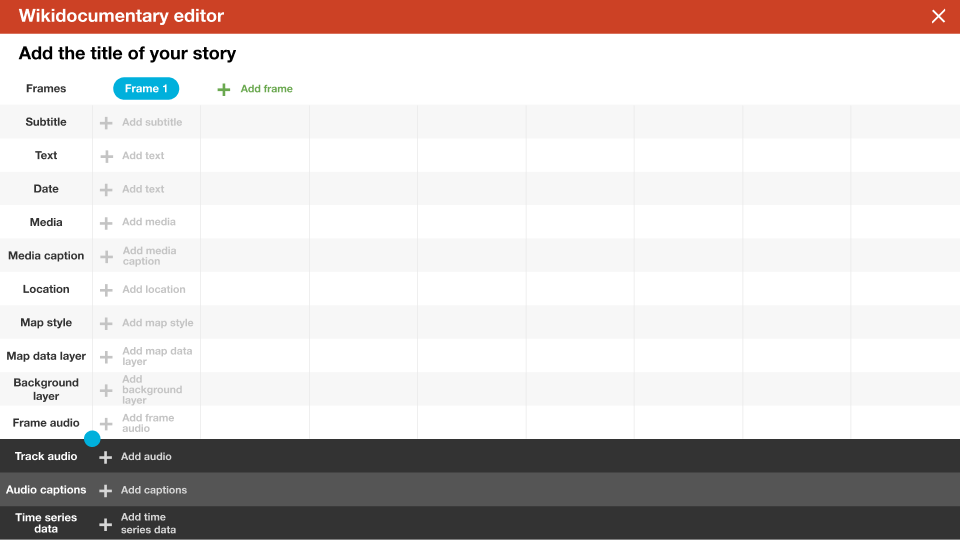

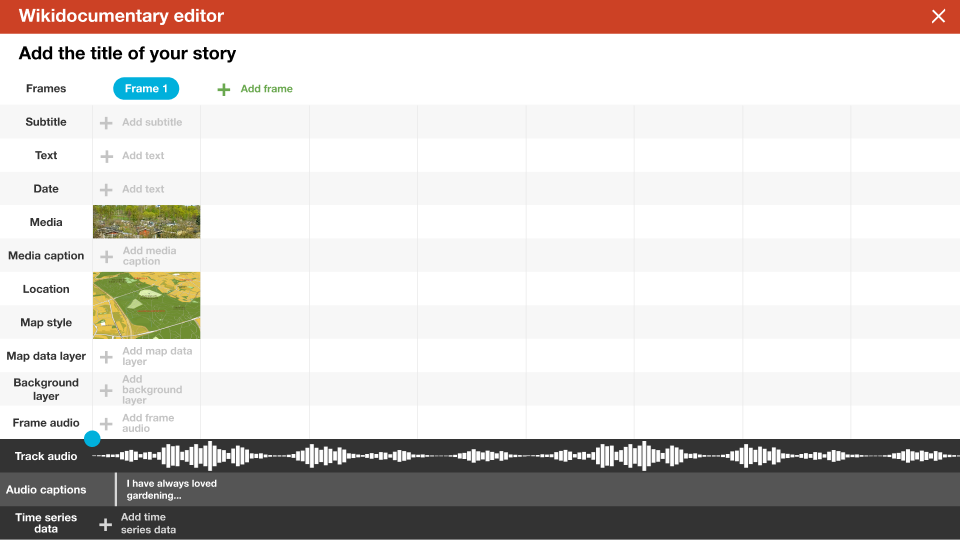

Different editor views

Timeline editor

Text editor

Collection editor/organizer

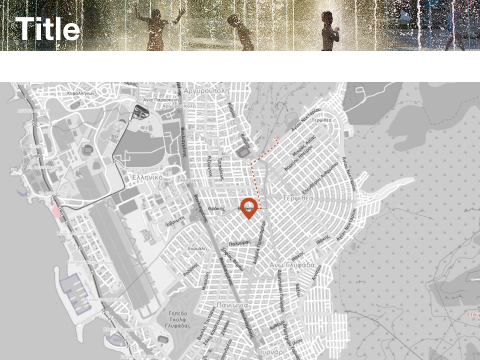

Map/frame editor

Voiceover recorder

Mobile

- Different screen real estate

- Possibility to easily record images, sound, video or location

- Possibility to connect to other contributors or share

Using AR

- The map background is used in navigating between frames. Frames can be accessed in random order.

- The frames can display AR content. Ideas?

Interaction

- Things that the user can be asked to do: Take pictures, record a fact, record audio...

- Wikidocumentaries as gradually evolving presentations: Users can add an aspect to an existing wikidocumentary.

Map-based wikidocumentary (outdated information has not yet been cleaned from this section)

Transferred from Map

Based on frames that consist of several parameters

- Coordinate location

- View angle

- Time value

- Visual media

  - Image plane and view angle for overlays (on the map background, in 3D space)

  - Medium (video, image, image series, image pair)

- Headline & subheader

- Text

  - Hyperlinks?

  - Media

- Audio

  - Fixed timeline throughout presentation, such as a narrative

  - Fixed timeline over one frame

  - Soundscape loops or generative soundscapes

  - Effects, synchronized sound

- Map settings

  - Map style

  - Animations

  - Layers

  - Timeline display

Display options

Map

- Map is the base element

- Each frame represents a point from which the map is seen

- Map settings are different for each map frame

- An aerial image/video/illustration may overlay the map

- A media element may be added to the 3D view

- An image pair can be viewed with a revealing slider

- Map remains controllable in frames

Text

- Text can be scrollable and can include parallax elements.

- Media can be used as part of layout in the text stream. Media can be expanded to cover the full screen.

- The transition from one frame to another may also differ, for example a crossfade between media elements.

Audio

Locative elements

- Frame display triggered by GPS info

- Dynamic output

  - soundscape, colours, alternative content, nearby geotagged content

  - depending on data

    - proximity to a coordinate location

    - weather data

    - time of day, time of year...
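Triggering a frame by proximity to a coordinate location could be sketched as a distance check against the user's GPS position. This is an illustration only: the function names, the `(frame_id, lat, lon)` tuples, and the 30-metre default radius are assumptions, and the great-circle distance is computed with the standard haversine formula on a spherical Earth.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


def distance_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two points (haversine formula)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def triggered_frames(user_lat, user_lon, frames, radius_m=30.0):
    """Return the ids of frames located within radius_m of the user.

    frames: iterable of (frame_id, lat, lon) tuples (hypothetical shape).
    radius_m: trigger radius in metres; 30 m is an arbitrary example value.
    """
    return [
        frame_id
        for frame_id, lat, lon in frames
        if distance_m(user_lat, user_lon, lat, lon) <= radius_m
    ]
```

The other inputs in the list, such as weather data or time of day, could be added as further conditions alongside the distance check.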

User-generated

- Interface to create frames and configure settings

Generated

- AI-assisted frame generation from Wikipedia articles

- User-enhanced in the same interface

To think

- Animations of time-series, how do they relate to frames? Can frames be marked as time intervals, or points in time?

- Can wikidocumentaries be multidimensional, including, for example, temporal depth across their own timeline?

Old graphics
